
11/11/2023 Lasso regression stata

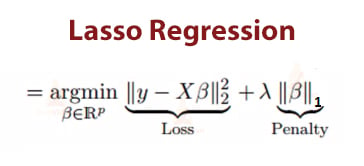

The standard linear model (the ordinary least squares method) performs poorly when a large multivariate data set contains more variables than samples. A better alternative is penalized regression, which fits a linear regression model that is penalized for having too many variables by adding a constraint to the equation (James et al.). These techniques are also known as shrinkage or regularization methods. The consequence of imposing the penalty is to reduce (i.e., shrink) the coefficient values towards zero, so that the less contributive variables have a coefficient close to zero or equal to zero. Note that shrinkage requires the selection of a tuning parameter (lambda) that determines the amount of shrinkage.

In this chapter we’ll describe the most commonly used penalized regression methods, including ridge regression, lasso regression and elastic net regression. We’ll also provide practical examples in R.

Ridge regression shrinks the regression coefficients, so that variables with a minor contribution to the outcome have their coefficients close to zero. The shrinkage is achieved by penalizing the regression model with an L2 penalty, which is the sum of the squared coefficients (the squared L2-norm). The amount of the penalty can be fine-tuned using a constant called lambda (\(\lambda\)). Selecting a good value for \(\lambda\) is critical. When \(\lambda = 0\), the penalty term has no effect, and ridge regression produces the classical least squares coefficients. However, as \(\lambda\) increases towards infinity, the impact of the shrinkage penalty grows, and the ridge regression coefficients get close to zero.

Note that, in contrast to ordinary least squares regression, ridge regression is highly affected by the scale of the predictors. Therefore, it is better to standardize (i.e., scale) the predictors before applying ridge regression (James et al. 2014), so that all the predictors are on the same scale. A predictor x can be standardized using the formula x' = x / sd(x), where sd(x) is the standard deviation of x. As a consequence, all standardized predictors have a standard deviation of one, so the final fit does not depend on the scale on which the predictors are measured.

One important advantage of ridge regression is that it still performs well, compared to the ordinary least squares method, when you have large multivariate data with more predictors (p) than observations (n). One disadvantage of ridge regression is that it includes all the predictors in the final model, unlike the stepwise regression methods, which generally select models that involve a reduced set of variables. Ridge regression shrinks the coefficients towards zero, but it will not set any of them exactly to zero. The lasso regression is an alternative that overcomes this drawback.

Lasso stands for Least Absolute Shrinkage and Selection Operator. It shrinks the regression coefficients toward zero by penalizing the regression model with a penalty term called the L1-norm, which is the sum of the absolute values of the coefficients. In the case of lasso regression, the penalty forces some of the coefficient estimates, those with a minor contribution to the model, to be exactly equal to zero. This means that the lasso can also be seen as an alternative to the subset selection methods for performing variable selection in order to reduce the complexity of the model. As in ridge regression, selecting a good value of \(\lambda\) for the lasso is critical.

One obvious advantage of lasso regression over ridge regression is that it produces simpler and more interpretable models that incorporate only a reduced set of the predictors. However, neither ridge regression nor the lasso will universally dominate the other. Generally, lasso might perform better in a situation where some of the predictors have large coefficients and the remaining predictors have very small coefficients.
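For reference, the ridge and lasso penalties can be written as objective functions. The notation here is an assumption that follows the usual textbook presentation rather than anything defined in this post: \(y_i\) is the outcome for observation \(i\), \(x_{ij}\) is the value of predictor \(j\), \(\beta_0\) is an unpenalized intercept, and \(\lambda \ge 0\) is the tuning parameter.

\[
\hat{\beta}^{\text{ridge}} = \arg\min_{\beta} \left\{ \sum_{i=1}^{n} \Big( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \Big)^2 + \lambda \sum_{j=1}^{p} \beta_j^2 \right\}
\]

\[
\hat{\beta}^{\text{lasso}} = \arg\min_{\beta} \left\{ \sum_{i=1}^{n} \Big( y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij} \Big)^2 + \lambda \sum_{j=1}^{p} |\beta_j| \right\}
\]

The two objectives differ only in the penalty term: squared coefficients for ridge, absolute values for the lasso. Setting \(\lambda = 0\) in either one recovers ordinary least squares.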
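The contrast between the two penalties can be made concrete with a small numerical sketch. The chapter's own examples are in R; the code below uses plain Python (standard library only) purely for illustration, and it is not the method of any particular package. The function names, the toy data, and the choice of coordinate descent as the solver are all assumptions made for this example. It also centers each predictor in addition to dividing by sd(x), so that no intercept is needed.

```python
import random
import statistics


def standardize(column):
    """Center a predictor and divide by its standard deviation: x' = (x - mean) / sd(x).

    Centering is an extra assumption of this sketch (it removes the intercept).
    """
    mean = statistics.fmean(column)
    sd = statistics.stdev(column)
    return [(v - mean) / sd for v in column]


def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; return exactly 0.0 inside the threshold."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def fit_penalized(columns, y, lam, penalty, n_sweeps=200):
    """Coordinate descent for RSS + lam * penalty, with penalty 'ridge' or 'lasso'.

    columns: list of p standardized predictor columns, each of length n.
    y: centered response of length n.
    """
    beta = [0.0] * len(columns)
    residual = list(y)  # residual = y - X @ beta, and beta starts at zero
    for _ in range(n_sweeps):
        for j, col in enumerate(columns):
            # rho: inner product of column j with the residual that excludes column j
            rho = sum(x * (r + x * beta[j]) for x, r in zip(col, residual))
            z = sum(x * x for x in col)
            if penalty == "ridge":
                new_b = rho / (z + lam)  # shrinks, but is never exactly zero
            else:
                new_b = soft_threshold(rho, lam / 2.0) / z  # can be exactly zero
            delta = new_b - beta[j]
            if delta != 0.0:
                residual = [r - x * delta for x, r in zip(col, residual)]
                beta[j] = new_b
    return beta


def make_demo_data(n=50, seed=0):
    """Hypothetical toy data: 5 predictors on different scales, only the first two matter."""
    rng = random.Random(seed)
    scales = [1.0, 10.0, 0.1, 5.0, 2.0]
    raw = [[rng.gauss(0.0, s) for _ in range(n)] for s in scales]
    columns = [standardize(col) for col in raw]
    y = [3.0 * a - 2.0 * b + rng.gauss(0.0, 0.5)
         for a, b in zip(columns[0], columns[1])]
    y_mean = statistics.fmean(y)
    return columns, [v - y_mean for v in y]


columns, y = make_demo_data()
for lam in (0.0, 40.0):
    ridge = fit_penalized(columns, y, lam, "ridge")
    lasso = fit_penalized(columns, y, lam, "lasso")
    print(f"lambda={lam:>5}: ridge={[round(b, 3) for b in ridge]}")
    print(f"lambda={lam:>5}: lasso={[round(b, 3) for b in lasso]}")
```

With lambda set to 0 both fits reduce to least squares. With a positive lambda, the ridge update divides by a larger number and so shrinks every coefficient without zeroing it, while the lasso update subtracts a fixed threshold and sets any coefficient inside that threshold to exactly zero. In practice lambda would be chosen by cross-validation rather than fixed by hand as it is here.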
